AI that does more with less waste.

IgnisPrompt handles routine AI tasks locally — summarizing, drafting, extracting — and only escalates to the cloud when truly needed. Then it shows you the impact, like a health app for your AI footprint.

Most AI work doesn't need premium compute

Summarizing a short email. Extracting dates from a message. Drafting a quick reply. These are routine tasks — but they still trigger expensive, energy-intensive large model calls.

AI systems treat every request like it's complex

Today's AI assistants send everything to large language models, even when the task is simple. A request to "summarize this 3-sentence email" gets the same compute power as "write a comprehensive research report." That's like using a semi-truck to deliver a letter.

The result: unnecessary latency, inflated costs, and wasted infrastructure capacity. At scale, these inefficiencies add up to real operational burden and environmental impact.

Even routine interactions carry cost at scale. OpenAI's CEO, Sam Altman, has publicly acknowledged that seemingly trivial patterns, such as users typing "please" and "thank you," have cost the company tens of millions of dollars.

The solution isn't to shame users or restrict features. It's to route smarter: handle lightweight work close to the user, escalate only when necessary, and measure the results.

Local-first routing for routine AI tasks

IgnisPrompt sits between your UI and your models. It detects what the user needs, routes routine work to small local models, and escalates complex tasks to the cloud. Then it shows you exactly what happened.
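To make the routing idea concrete, here is a minimal sketch in Python. It is not IgnisPrompt's actual API: the names `detect_task`, `route`, `Decision`, the keyword rules, and the 300-word threshold are all assumptions made for illustration. A production router would likely use a trained classifier rather than keyword matching.

```python
from dataclasses import dataclass

# Hypothetical task labels and size threshold, chosen only for illustration.
ROUTINE_TASKS = {"summarize", "extract", "draft"}
MAX_LOCAL_WORDS = 300


@dataclass
class Decision:
    target: str  # "local" or "cloud"
    reason: str  # human-readable explanation, useful for logging


def detect_task(prompt: str) -> str:
    """Rough keyword-based intent detection (a stand-in for a real classifier)."""
    text = prompt.lower()
    if "summar" in text:
        return "summarize"
    if "extract" in text or "find the date" in text:
        return "extract"
    if "reply" in text or "draft" in text:
        return "draft"
    return "other"


def route(prompt: str) -> Decision:
    """Keep short, routine requests local; escalate everything else."""
    task = detect_task(prompt)
    words = len(prompt.split())
    if task in ROUTINE_TASKS and words <= MAX_LOCAL_WORDS:
        return Decision("local", f"routine task '{task}', {words} words")
    return Decision("cloud", f"task '{task}' with {words} words needs escalation")


if __name__ == "__main__":
    print(route("Summarize this 3-sentence email: see you at noon."))
    print(route("Write a comprehensive research report on battery chemistry."))
```

In this sketch the short summary request stays local and the research report escalates to the cloud, mirroring the two examples above.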

Lower costs

Fewer unnecessary large-model calls mean lower inference spend. Handle summaries, extraction, and simple drafting locally, and save cloud calls for work that truly needs them.

Faster responses

Local models skip the network round trip, so routine requests feel immediate. Complex work still uses cloud power when needed.

Measurable impact

Every routing decision is logged. See the split between local and cloud calls, latency improvements, and estimated emissions savings. Transparent methodology, no greenwashing.
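As a rough illustration of what logging every decision could enable, the Python sketch below aggregates a list of log entries into dashboard-style figures. The `RouteLog` record and `summarize` function are hypothetical names, and the latency values in the usage example are placeholders, not measurements.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RouteLog:
    target: str        # "local" or "cloud"
    latency_ms: float  # end-to-end latency recorded for the request


def summarize(logs: list[RouteLog]) -> dict:
    """Aggregate logged decisions into the figures a dashboard might display."""
    local = [entry.latency_ms for entry in logs if entry.target == "local"]
    cloud = [entry.latency_ms for entry in logs if entry.target == "cloud"]
    return {
        "local_calls": len(local),
        "cloud_calls": len(cloud),
        "local_share": len(local) / len(logs) if logs else 0.0,
        "avg_local_ms": mean(local) if local else None,
        "avg_cloud_ms": mean(cloud) if cloud else None,
    }


if __name__ == "__main__":
    # Placeholder values for illustration only.
    sample = [
        RouteLog("local", 40.0),
        RouteLog("local", 60.0),
        RouteLog("cloud", 900.0),
        RouteLog("cloud", 1100.0),
    ]
    print(summarize(sample))
```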

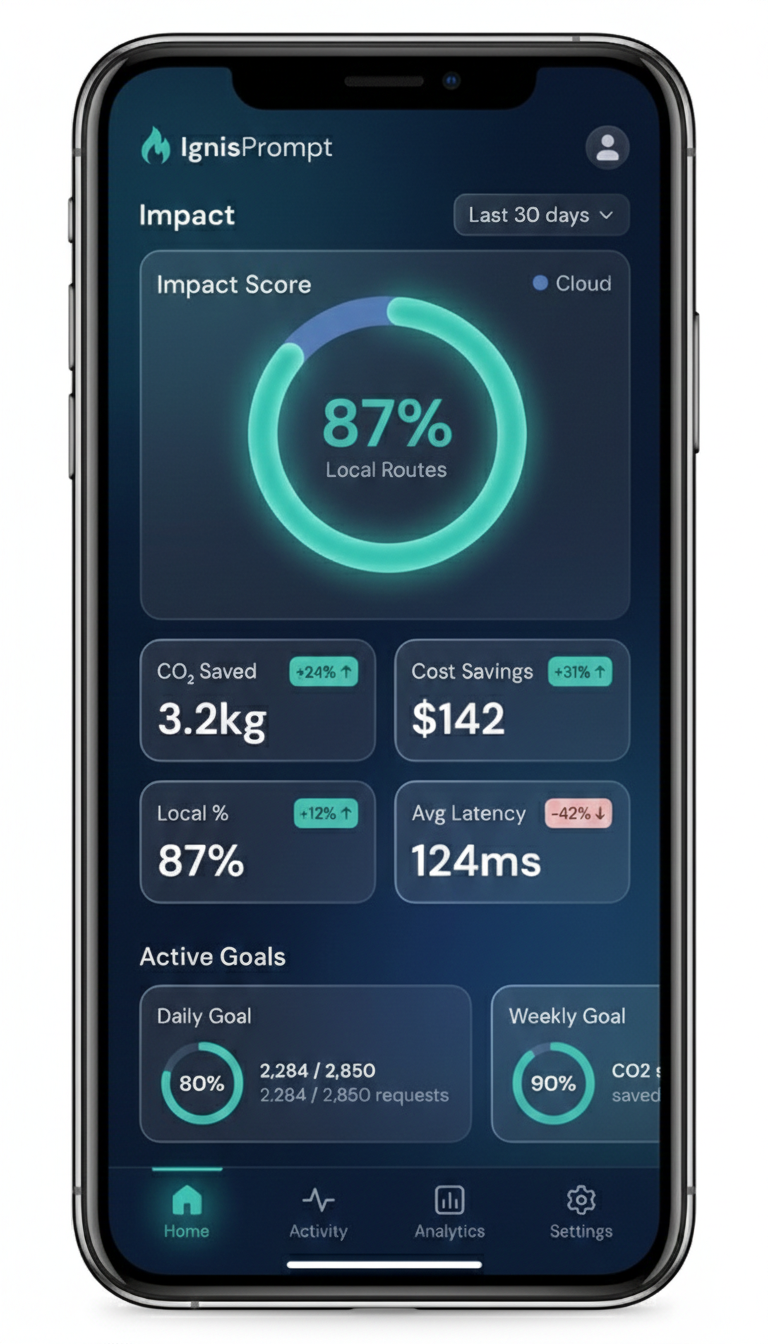

Your personal impact dashboard

Like a health app that tracks your steps and calories, IgnisPrompt tracks your contribution to reducing AI compute waste. See your daily routing decisions, cumulative savings, and progress toward sustainability goals.

What you'll see: Percentage of requests handled locally, latency improvements, estimated cost savings, and carbon footprint reduction based on transparent assumptions (grid intensity, data center efficiency).
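One way such an estimate could be computed is sketched below in Python. This is an assumed methodology, not IgnisPrompt's published one: it scales cloud energy by a data center overhead factor (PUE), subtracts the energy the local model uses instead, and multiplies by grid carbon intensity. The function name, parameters, and every input value are placeholders for illustration.

```python
def estimated_savings_g(
    local_requests: int,
    cloud_wh_per_request: float,
    local_wh_per_request: float,
    pue: float,
    grid_g_per_kwh: float,
) -> float:
    """Estimate grams of CO2e avoided by serving requests locally.

    Assumptions: cloud energy is scaled by PUE (data center overhead),
    local energy is not, and both draw on a grid of the same intensity.
    """
    cloud_wh = local_requests * cloud_wh_per_request * pue
    local_wh = local_requests * local_wh_per_request
    saved_kwh = (cloud_wh - local_wh) / 1000.0
    return saved_kwh * grid_g_per_kwh


if __name__ == "__main__":
    # Placeholder inputs, not measured values.
    grams = estimated_savings_g(
        local_requests=1000,
        cloud_wh_per_request=2.0,
        local_wh_per_request=0.5,
        pue=1.5,
        grid_g_per_kwh=400.0,
    )
    print(f"Estimated savings: {grams:.0f} g CO2e")
```

Publishing the inputs alongside the result is what keeps a figure like this auditable: anyone who disagrees with an assumption can rerun the calculation with their own numbers.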

It's not about guilt or virtue signaling — it's about seeing the real impact of smarter routing, visualized in a way that feels rewarding.

Teams shipping AI to millions

If you're building AI products at scale, IgnisPrompt helps you ship faster, cheaper, and more responsibly.

AI Platforms

Offer routing as a capability to your customers. Let them set cost caps, latency tiers, and sustainability preferences — differentiate on efficiency, not just model quality.

Enterprise Dev Teams

Predictable costs, better performance, and built-in compliance routing. Keep sensitive data local and route public requests to cloud LLMs. Full instrumentation and observability.
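A compliance rule of this kind might look like the Python sketch below. The patterns, the `compliance_route` function, and the `"cloud-eligible"` label are hypothetical. A real deployment would rely on its own data classification policy rather than two regular expressions.

```python
import re

# Hypothetical patterns for illustration; real rules depend on your data policy.
SENSITIVE_PATTERNS = [
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),  # email addresses
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),     # US SSN-style numbers
]


def contains_sensitive(text: str) -> bool:
    """Return True if any sensitive pattern appears in the text."""
    return any(pattern.search(text) for pattern in SENSITIVE_PATTERNS)


def compliance_route(text: str) -> str:
    """Pin requests with sensitive data to local models; others may use the cloud."""
    return "local" if contains_sensitive(text) else "cloud-eligible"


if __name__ == "__main__":
    print(compliance_route("Reply to jane.doe@example.com about the invoice"))
    print(compliance_route("What is the capital of France?"))
```

Running the compliance check before any cost or latency logic means a sensitive request can never be escalated by a later rule.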

Sustainability Leaders

Transparent emissions methodology with published assumptions. Export reports for ESG disclosure. Real measurement, not marketing spin.